Kandinsky 2.2: The Best Open-Source AI Image Model with a Permissive License

The team behind Kandinsky released their latest model, Kandinsky 2.2, two days ago. Kandinsky is known for its creativity and artistic results, but with the new update it seems to be leaning towards realism by default. It offers a higher base resolution (1024x1024), supports ControlNet, and produces wider scenes with more detail in general, all while being released under a permissive, open-source license. Let’s take a look at what it offers.

More Realistic

Kandinsky 2.1 is a very creative model that produces interesting art. Although it can produce realistic images, realism is not its strong suit. Kandinsky 2.2 takes a similar approach to Midjourney v5 and leans more towards realism. It is still capable of producing creative images, but if you give no direction, it tends to produce realistic images by default.

Here is an example with Kandinsky 2.1 on the left and Kandinsky 2.2 on the right. Notice how 2.1 produces an almost painting-like image, while 2.2 produces a more realistic image when there is no mention of a style.

“A cat on a boat”

Here is another example with specific direction regarding realism. Aside from the obvious resolution difference, notice how 2.2 is able to create more realistic details, lighting, cloth texture and skin texture.

“Artemis as a black woman, 90mm studio photo, hyperrealistic”

Higher Resolution

Kandinsky 2.1 was trained on 768x768 pixel images. Because of this, if you went much wider or taller, say 1280 pixels on one side, it would produce repeating details, double heads, etc.

Kandinsky 2.2, on the other hand, is optimized to produce 1024x1024 pixel images.

The new version supports a wide range of aspect ratios without the doubling problems of the old one, which means you can go far wider or taller than 1024 pixels on one side.
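To make the aspect ratio point concrete, here is a minimal sketch of how you might pick a width and height for a given aspect ratio while keeping the total pixel count close to the 1024x1024 base resolution. The function name `dimensions_for_aspect_ratio` is hypothetical, and rounding each side to a multiple of 64 is our assumption for illustration, not something the Kandinsky team specifies.

```python
import math
from typing import Tuple

BASE_SIDE = 1024  # base resolution mentioned above
STEP = 64         # assumption: snap each side to a multiple of 64


def dimensions_for_aspect_ratio(
    ratio_w: int,
    ratio_h: int,
    base_side: int = BASE_SIDE,
    step: int = STEP,
) -> Tuple[int, int]:
    """Return (width, height) with roughly base_side * base_side pixels."""
    if ratio_w <= 0 or ratio_h <= 0:
        raise ValueError("aspect ratio parts must be positive")

    target_area = base_side * base_side
    ratio = ratio_w / ratio_h

    # Solve width * height = target_area with width / height = ratio.
    width = math.sqrt(target_area * ratio)
    height = width / ratio

    def snap(value: float) -> int:
        # Round to the nearest multiple of `step`, never below one step.
        return max(step, round(value / step) * step)

    return snap(width), snap(height)


if __name__ == "__main__":
    for w, h in [(1, 1), (16, 9), (9, 16), (2, 3)]:
        print(f"{w}:{h} ->", dimensions_for_aspect_ratio(w, h))
```

With these assumptions, a 16:9 request comes out at 1344x768, which is wider than 1024 pixels on one side while staying close to the same overall pixel count.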

Examples #1

More Detail, Wider Scenes

Kandinsky 2.2 is significantly better at fine details compared to 2.1 and seems to be favoring wider scenes in general. Kandinsky 2.1 is able to produce fine details on some scenes, especially close-ups, but it struggles when the shot gets wider or there is a need for too many fine details.

Examples #2

ControlNet Support

Kandinsky 2.2 introduces support for the much-loved ControlNet. Although this isn’t currently available on Stablecog, it will be soon. You can check out HuggingFace’s docs to learn how to use ControlNet with Kandinsky 2.2.

Below is an example of what it can achieve, from HuggingFace’s docs. You give it the image on the left and a prompt. Then, in this example, a depth map is created from that image and used to guide a better image-to-image generation. Finally, it produces the image on the right.

“A robot, 4k photo”

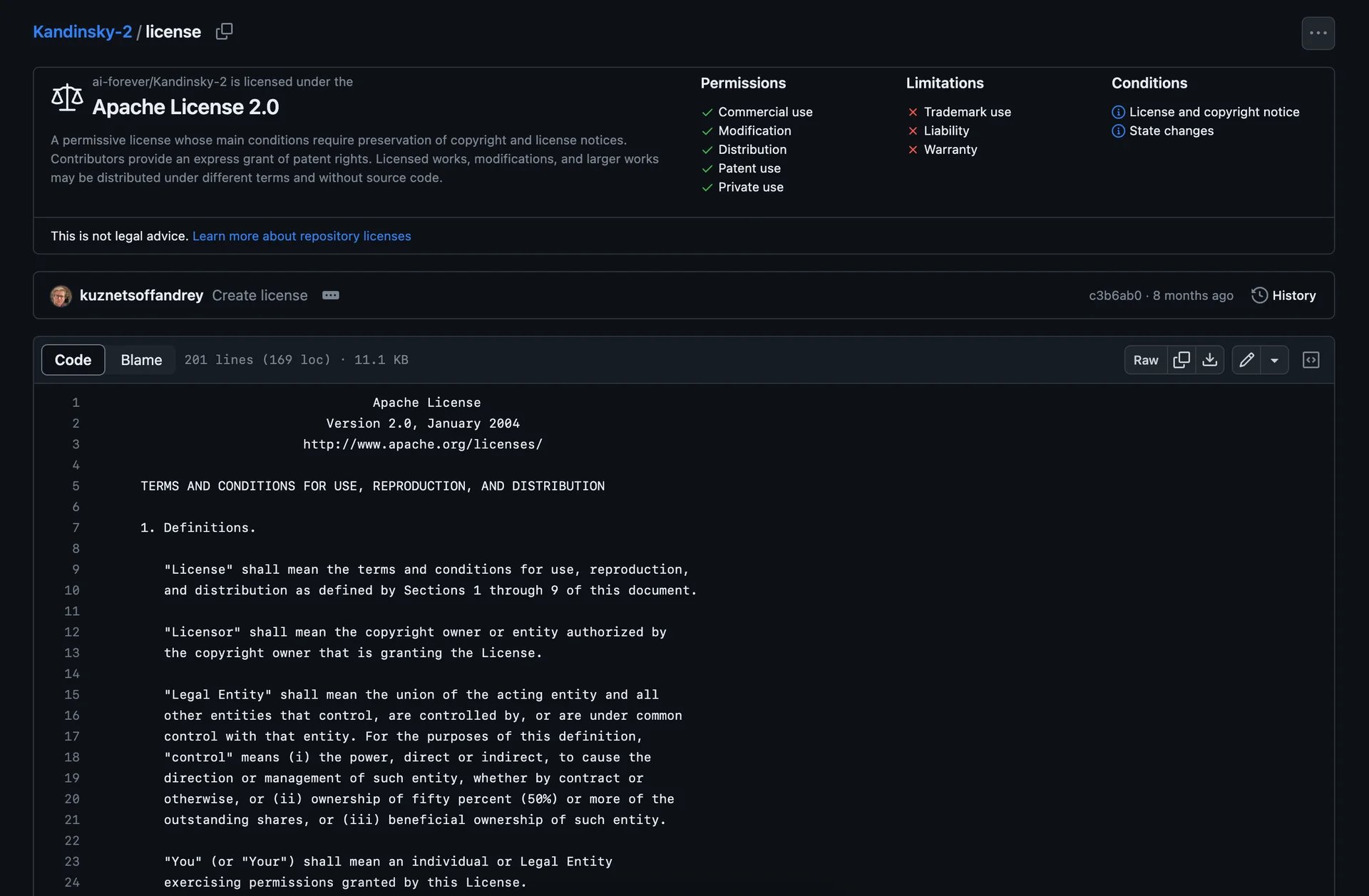

Permissive License

Lately, some open-source models have been switching to non-permissive, research-only licenses. Some have unknown release schedules for a permissive license, and some make no promise of a permissive license in the future. This means that you can’t use those models for commercial purposes, such as on a website where you sell products. It also means we can’t host them on Stablecog. Two of the latest examples are DeepFloyd’s IF model and SDXL, the latter of which is promised to be released under a permissive license in the future.

Kandinsky 2.2, just like 2.1, takes a different approach. It is released under a permissive license from the get-go. This means that you can use it for commercial purposes, and we can host it right away on Stablecog.

Conclusion

Considering its improvements in realism, higher base resolution, significantly better fine detail, ControlNet support, and the lack of recent competition with permissive licenses, we think Kandinsky 2.2 is the best open-source AI image model currently available with a permissive license. Because of this, we are making it the default model on Stablecog going forward.

Give It A Try

You can try Kandinsky 2.2 on Stablecog right now! Just click the button below and see what it can do.